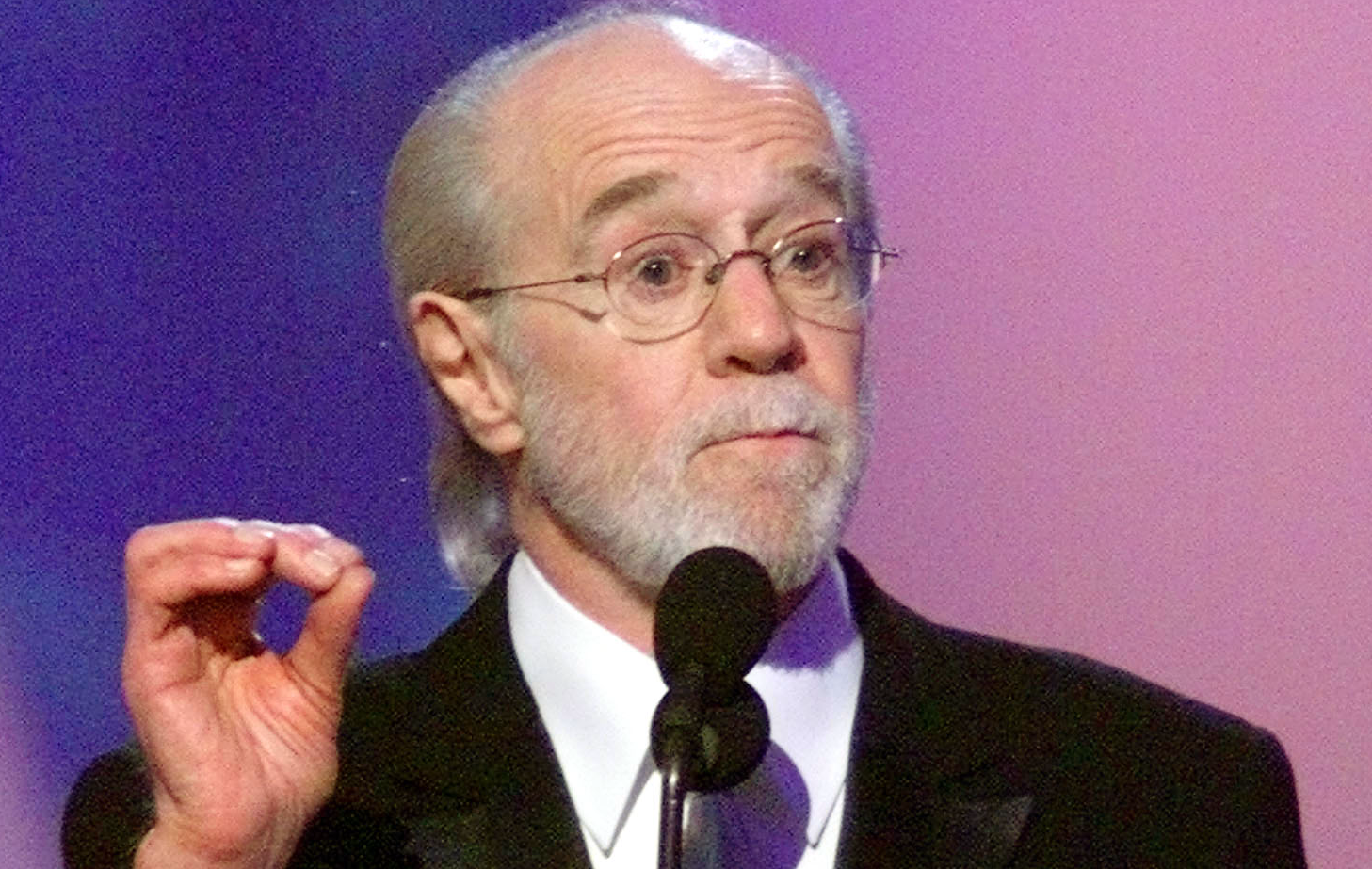

Comedian George Carlin died in 2008, but he’s back for an hour-long special, “George Carlin: I’m Glad I’m Dead,” which recently dropped on YouTube. The special is the work of a comedy duo that used deepfake technology to bring Carlin’s comedy back to life.

The artist’s daughter, Kelly Carlin, who manages her late father’s estate and did not grant permission for the fake Carlin special, responded angrily on X: “My dad spent a lifetime perfecting his craft from his very human life, brain and imagination. No machine will ever replace his genius.”

Kelly Carlin is exploring legal action, and she’s far from alone in questioning the unauthorized use of one’s likeness or work. You’ll recall that AI became a crucial bargaining point in the actors’ and writers’ strikes last year. Unauthorized use of their likenesses and writing styles by the studios was chief among their concerns. The agreements struck with the studios generally allow for AI tools to be used with appropriate compensation for union members.

It’s unclear what legal avenue the Carlin estate could pursue. Parody is well protected by the First Amendment, and the deceased generally don’t have privacy rights under US law. A better question, perhaps, is whether the underlying technology was illegally trained on Carlin’s material, which is part of a broader battle between copyright holders and AI developers that we’ve discussed at length in this newsletter.

Court action aside, Kelly Carlin plans to meet with SAG-AFTRA and help the union lobby Congress for better protections for the dead.