Faced with future threats, planners sometimes make the mistake of preparing to fight the last war. How can we avoid that problem in meeting the challenge posed by “fake news”? It helps to take a hard look at how these threats are evolving. Three thoughts:

We are all Russians now – Suspected Russian interference in the 2016 US presidential election continues to generate headlines, but new evidence suggests the injection of fake news into the US national bloodstream is increasingly a domestic phenomenon.

Before last week’s midterm elections, US political activists on the left and right were aggressively using Facebook and Twitter to spread false information. Both companies say that in recent weeks they found and took down hundreds of sites created by Americans, some with hundreds of thousands of followers, that deliberately promoted disinformation.

There’s nothing new about dirty tricks and party propaganda in US elections, and some content creators may be motivated more by profit than a desire to shape public opinion for political gain. But these increasingly sophisticated campaigns of misinformation appear directly inspired by Russian tactics.

Russian interference revealed a set of tools that can be easily used to sway or cast doubt over the outcome of elections. Even as users become more discerning about fake news, more actors will develop the means to deceive.

Supply follows demand – A new study published by BBC World Service finds that in India, distrust of mainstream media is leading people to spread information from alternative online sources without attempting to verify whether the news is true. The study also found that “a rising tide of nationalism in India” is poisoning political debate. “In India facts were less important to some than the emotional desire to bolster national identity,” wrote the study’s authors.

The urge to support favored political figures and parties, to denigrate others, and to promote a particular vision of India and its perceived enemies has led citizens both to supply fake news and to accept it as true without any serious attempt at verification. Of course, this problem has less to do with Indian nationalism than with human nature. It’s a growing problem everywhere.

This isn’t simply a story about bad people trying to deceive honest citizens. The human tendency to believe what we want to believe increases the appeal of falsehood and creates demand for more.

“Deep Fakes” are becoming more sophisticated – We’ve raised this alarm before and surely will again: Fake video is becoming more sophisticated. In particular, face-mapping technology, designed to improve television language dubbing, is making it easier to create video that appears to show a person saying or doing something they never actually said or did.

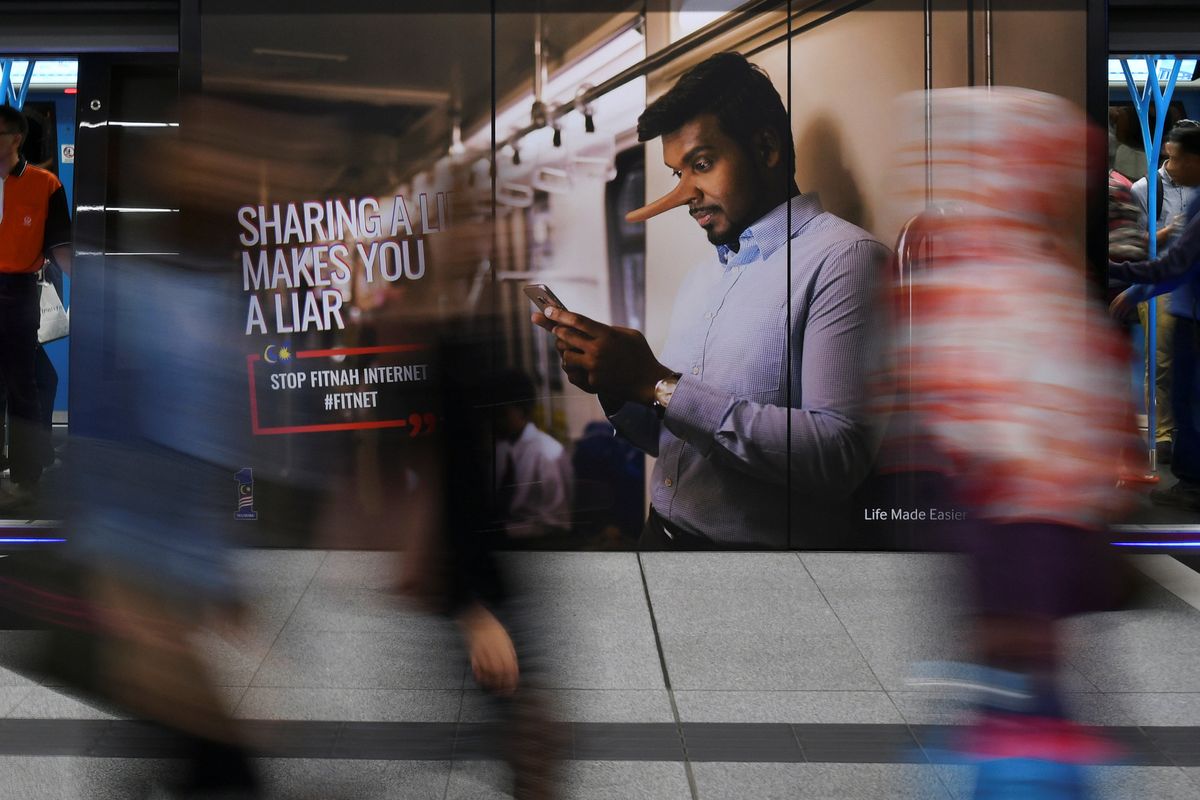

Experts in these technologies point out that AI algorithms can quickly identify phonies, and that so-called deep fakes—manipulated video—add only at the margins to an already serious fake news problem. But AI algorithms are useful only for those who want to know the truth, and video fakes can have a more emotional impact than written ones for the same reasons that a single provocative image can be emotionally more powerful than a thousand words.

The takeaway: Combine the increasingly commonplace nature of systematic disinformation, a natural desire to believe it, and a persuasive manufactured image, and fake news becomes a more complex and dangerous problem.