Posts

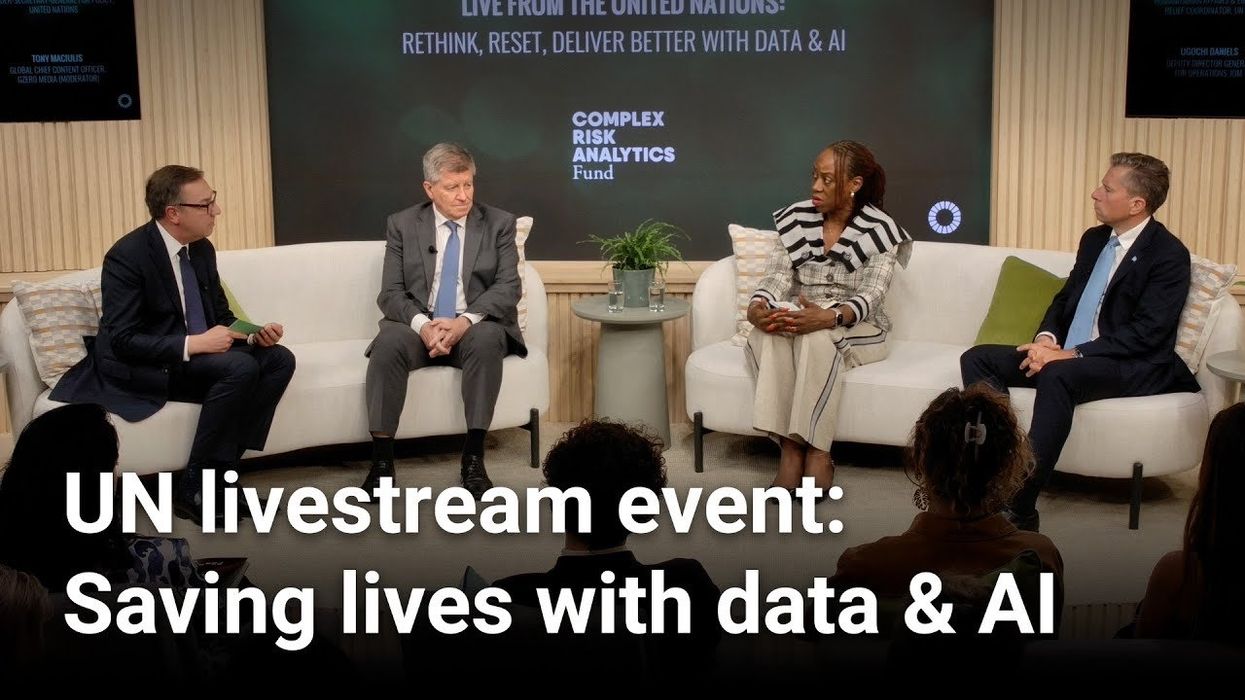

Watch today's Global Stage live premiere from the UN on 'The AI Divide'

Watch a recording of today's live premiere of our Global Stage discussion, “The AI Divide: From Warning to Action,” where we convened experts and policymakers at the United Nations to discuss AI equity and responsible deployment.

Mar 19, 2026