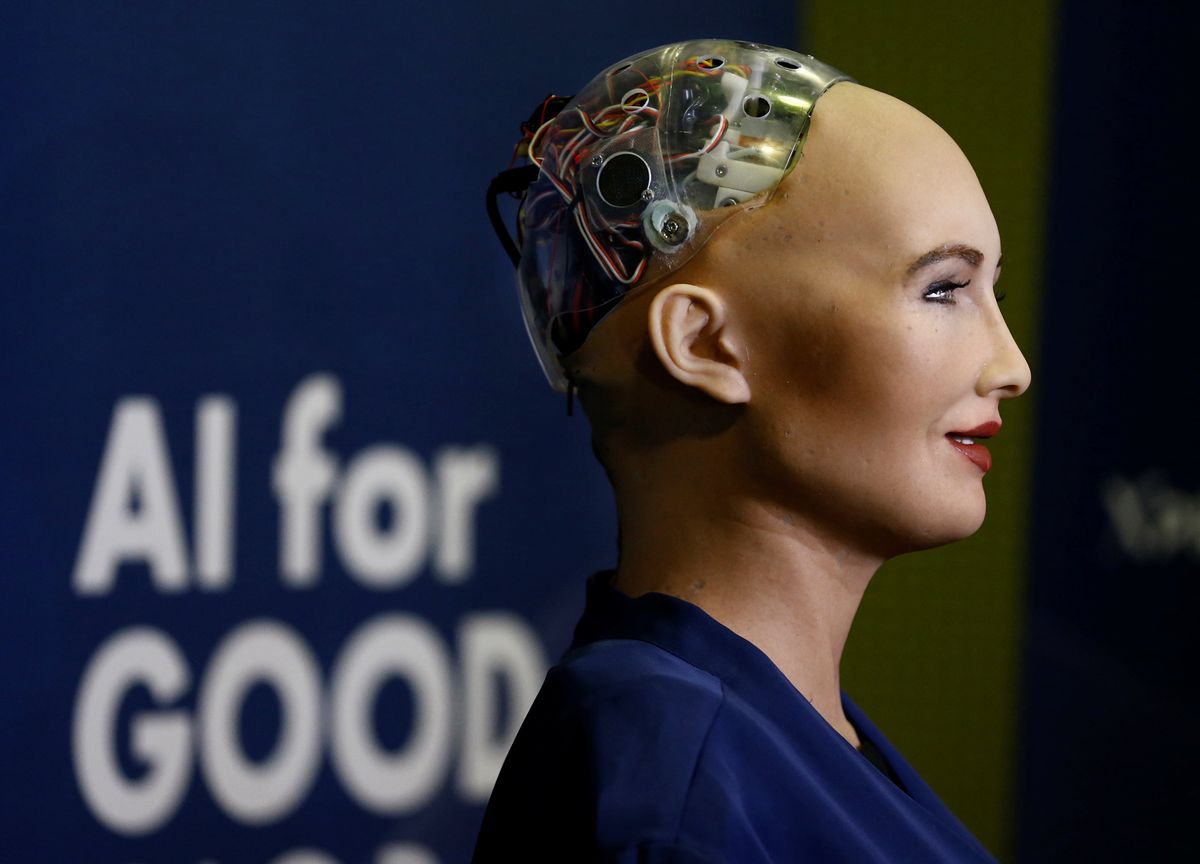

The drumbeat for regulating artificial intelligence (AI) is growing louder. Earlier this week, Sundar Pichai, the CEO of Google's parent company, Alphabet, became the latest high-profile Silicon Valley figure to call for governments to put guardrails around technologies that use huge amounts of (sometimes personal) data to teach computers how to identify faces, make decisions about mortgage applications, and perform myriad other tasks that previously relied on human brainpower.

"AI governance" was also a big topic of discussion at Davos this week. With big business lining up behind privacy and human-rights campaigners to call for rules for AI, it feels like the political ground is shifting.

But agreeing on the need for rules is one thing. Regulating a fuzzily defined technology that could be used for everything from detecting breast cancer to preventing traffic jams to monitoring people's movements and even their emotions will be a massive challenge.

Here are two arguments you're likely to hear more often as governments debate how to approach it:

Leave AI (mostly) alone: AI's enormous potential to benefit human health, the environment, and economic growth could be lost or delayed if the companies, researchers, and entrepreneurs working to roll out the technology end up stifled by onerous rules and regulations. Of course, governments should ensure that AI isn't used in ways that are unsafe or violate people's rights, but they should try to do it mainly by enforcing existing laws around privacy and product safety. They should encourage companies to adopt voluntary best practices around AI ethics, but they shouldn't over-regulate. This is the approach that the US is most likely to take.

AI needs its own special rules: Relying on under-enforced (or non-existent) digital privacy laws and trusting companies and public authorities to abide by voluntary ethics guidelines isn't enough. Years of light-touch regulation of digital technologies have already been disastrous for personal privacy. The potential harm to people or damage to democracy from biased data, shoddy programming, or misuse by companies and governments could be even greater without rules for AI. Also, we don't really know most of the ways AI will be used in the future, for good or for ill. To have any chance of keeping up, governments should try to create broad rules to ensure that whatever use it is put to, AI is developed and used safely and in ways that respect democratic principles and human rights. Some influential voices in Europe are in this camp.

There's plenty of room between these two poles. For example, governments could try to avoid overly broad rules while reserving the right to intervene in narrower cases, such as the use of facial recognition in surveillance, or in sensitive sectors like finance or healthcare, where they see bigger risks if something goes wrong.

This story is about to get a lot bigger. The EU is preparing to unveil its first big push for AI regulation, possibly as soon as next month. The Trump administration has already warned the Europeans not to impose tough new rules on Silicon Valley companies. China – the world's other emerging AI superpower – is also in the mix. Beijing's ambitious plans to harness AI to transform its economy, boost its military capabilities, and ensure the Communist Party's grip on power have contributed greatly to rising tensions between Washington and Beijing. But China is also working on its own approach to AI safety and ethics amid worries that other countries could get out ahead in setting the global agenda on regulation.

As with digital privacy, 5G data networks, and other high-tech fields, there is a risk that countries' competing priorities and intensifying geopolitical rivalries over technology will make it hard to find a common international approach.