GZERO World Clips

Why Big Tech companies are like “digital nation states”

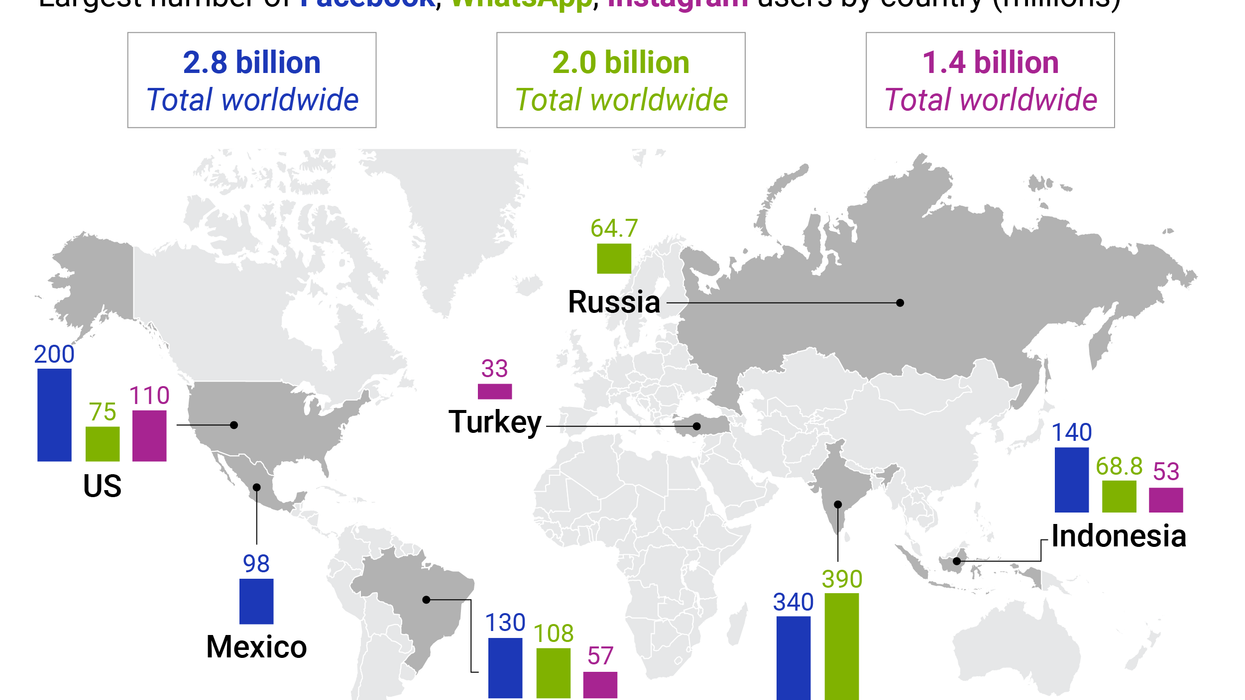

No government today has the toolbox to rein in Big Tech. That's why it's time to start thinking of the biggest tech companies as bona fide "digital nation states" with their own foreign relations, Ian Bremmer explains on GZERO World. Never has a small group of companies held such expansive influence over humanity, and in this vast new digital territory, governments have little idea what to do.

Oct 28, 2021