Hard Numbers

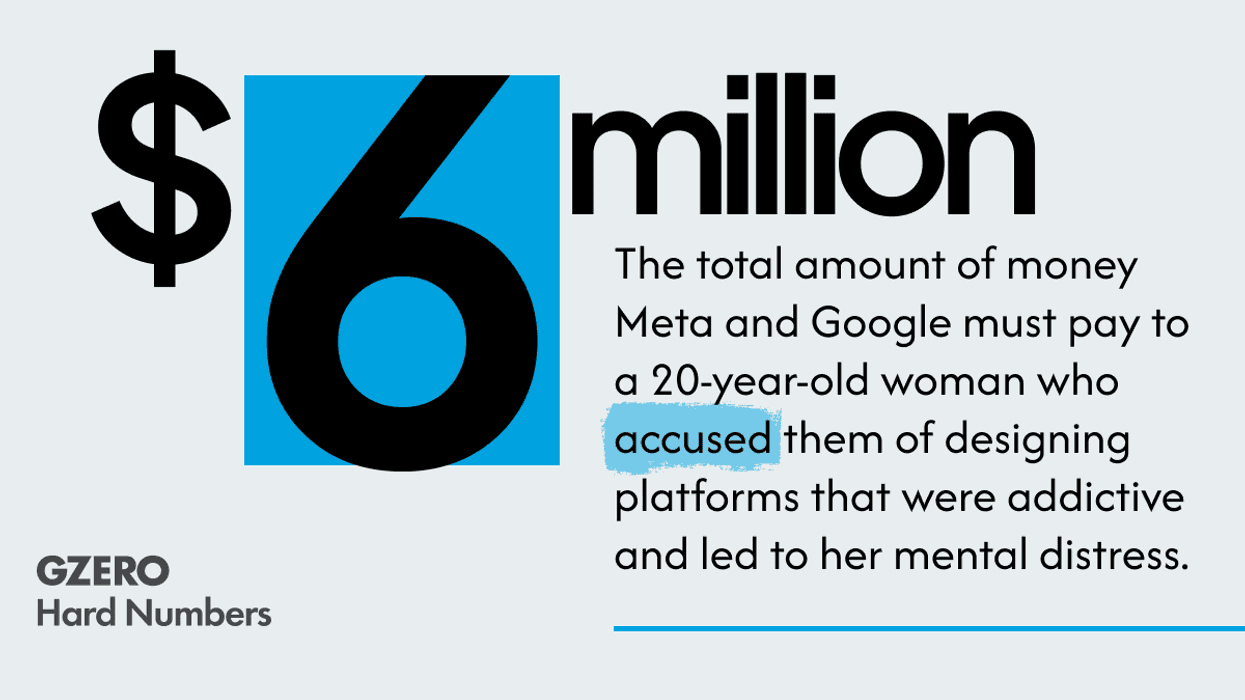

Jury finds social media giants negligent in landmark trial

On Wednesday, a jury found the tech giants behind Instagram and YouTube liable for designing platforms that are harmful to young people, a landmark verdict that could open up social media companies to more lawsuits over users’ mental health.

Mar 26, 2026