Economy

Global economy news and analysis from GZERO Media


Ian Bremmer's Quick Take: Hi, everybody. Ian Bremmer here. A happy Monday to you. And a Quick Take today on artificial intelligence and how we think about it geopolitically. I'm an enthusiast, I want to be clear. I am more excited about AI as a new development to drive growth and productivity for 8 billion of us on this planet than anything I have seen since the internet, maybe since we invented the semiconductor. It's extraordinary how much that will apply human ingenuity faster and more broadly to challenges that exist today and those we don't even know about yet. But I'm not concerned about the upside in the sense that a huge amount of money is being devoted towards those companies. The people that run them are working as fast as they humanly can to get better and to unlock those opportunities and to also beat their competitors, get there faster. I'm worried about what happens that is more challenging, that we're not spending the resources on the consequences that will be more upsetting for populations from artificial intelligence. The ones that will require some level of government and other intervention or else. And I see four of them.

First is disinformation. We know that AI bots can be very confident and they're also frequently very wrong. And if you can no longer discern an AI bot from a human being in text and very soon in audio and in videos, then that means that you can no longer discern truth from falsehood. And that is not good news for democracies. It's actually good news for authoritarian countries that deploy artificial intelligence for their own political stability and benefit. But in a country like the United States or Canada or Europe or Japan, it's much more deeply corrosive. And I think that this is an area that unless we are able to put very clear labeling and restrictions on what is AI and what is not AI, we're going to be in very serious trouble in terms of the erosion of our institutions much faster than anything we've seen through social media or through cable news or through any of the other challenges that we've had in the information space.

Secondly, and relatedly, is proliferation. Proliferation of AI technologies by either bad actors or by tinkerers that don't have the knowledge and are indifferent to the chaos that they may sow. We today are in an environment with about a hundred human beings that have both the knowledge and the technology to deploy a smallpox virus. Don't do that, right? But very soon with AI, those numbers are going way up. And not just in terms of the creation of new dangerous viruses or lethal autonomous drones, but also in their ability to write malware and deploy it to take money from people or to destroy institutions or to undermine an election. All of these things in the hands not just of a small number of governments, but individuals that have a laptop and a little bit of programming skill is going to make it a lot harder to effectively respond. We saw some of this with the cyber, offensive cyber scare, which then of course created a lot of security and big industries around that to respond and lots of costs. That's what we're going to see with AI, but in every field.

Then you have the displacement risk. A lot of people have talked about this. It's a whole bunch of people that no longer have productive jobs because AI replaces them. I'm not particularly worried about this in the macro setting, in the sense that I believe that the number of jobs that will be created, new jobs, many of which we can't even think about right now, as well as the number of existing jobs that become much more productive because they are using AI effectively, will outweigh the jobs that are lost through artificial intelligence. But they're going to happen at the same time. And unless you have policies in place that help retrain and also economically just take care of the people that are displaced in the nearest term, those people get angrier. Those people become much more supportive of anti-establishment politicians. They become much angrier and feel like their existing political leaders are illegitimate. We've seen this through free trade and hollowing out of middle classes. We've seen it through automation and robotics. It's going to be a lot faster, a lot broader with AI.

And then finally, and the one that I worry about the most and it doesn't get enough attention, is the replacement risk. The fact that so many human beings will replace relationships they have with other human beings. They'll replace them with AI. And they may be doing this knowledgeably, they may be doing this without knowledge. But, I mean, certainly I see how much, with early-stage AI bots, people are developing actual relationships with these things, particularly young people. And we as humans need communities and families and parents that care about us and take care of us to become socially adaptable animals.

And when that's happening through artificial intelligence that not only doesn't care about us, but also doesn't have human beings as a principal interest, the principal interest is the business model and the human beings are very much subsidiary and not necessarily aligned, that creates a lot of dysfunction. I fear that a level of dehumanization that could come very, very quickly, especially for young people through addictions and antisocial relationships with AI, which we'll then try to fix through AI bots that can do therapy, is a direction that we really don't want to head on this planet. We will be doing real-time experimentation on human beings. And we never do that with a new GMO food. We never do that with a new vaccine, even when we're facing a pandemic. We shouldn't be doing that with our brains, with our persons, with our souls. And I hope that that gets addressed real fast.

So anyway, that's a little bit for me and the geopolitics of AI, something I'm writing about, thinking about a lot these days. And I hope everyone's well, and I'll talk to you all real soon.


More from Economy

The Gulf rift gets ugly

May 14, 2026

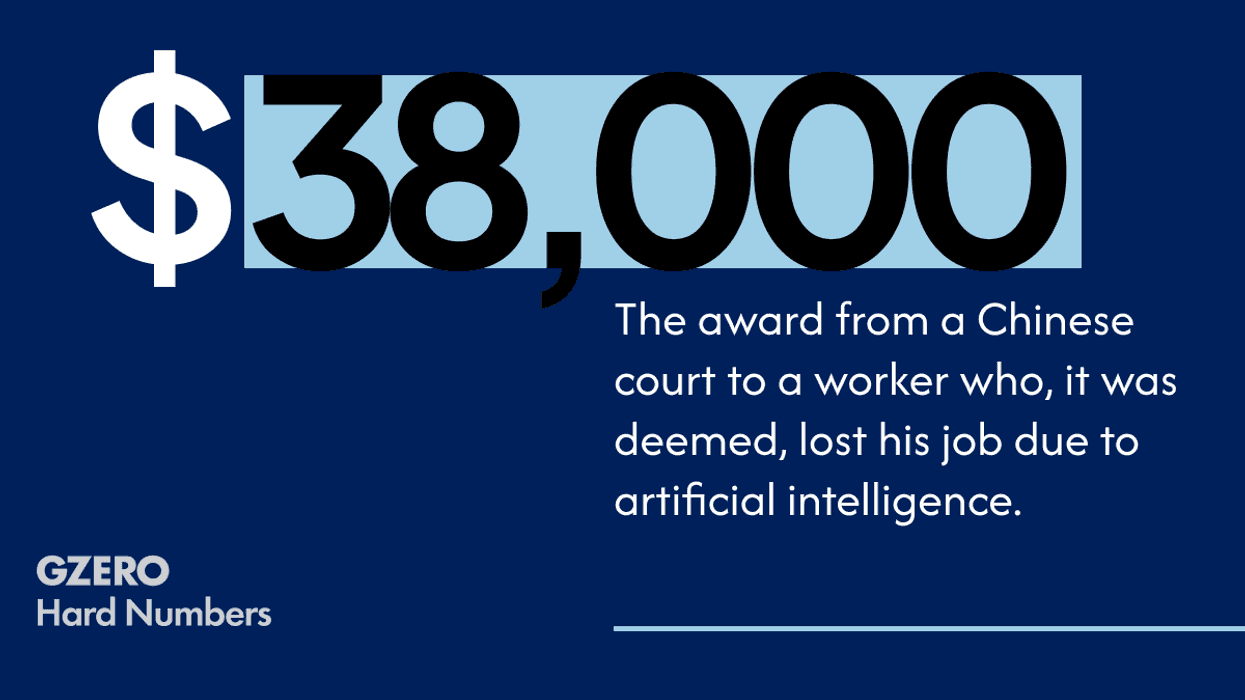

Chinese court compensates AI-replaced worker

May 14, 2026

What to watch for at the Trump-Xi summit

May 13, 2026

The momentum behind women’s sports

May 13, 2026

The Anthropic-Pentagon fallout, explained

May 13, 2026

What’s Good Wednesday: May 13, 2026

May 13, 2026

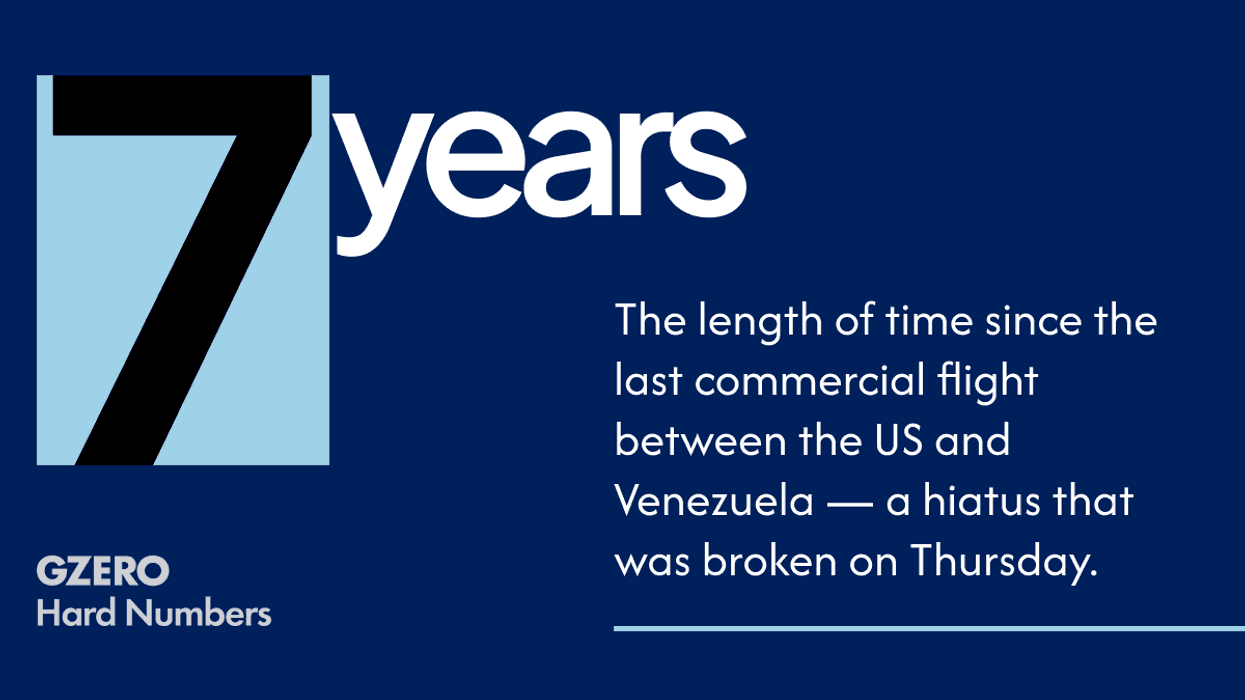

Did the US actually stabilize Venezuela?

May 12, 2026

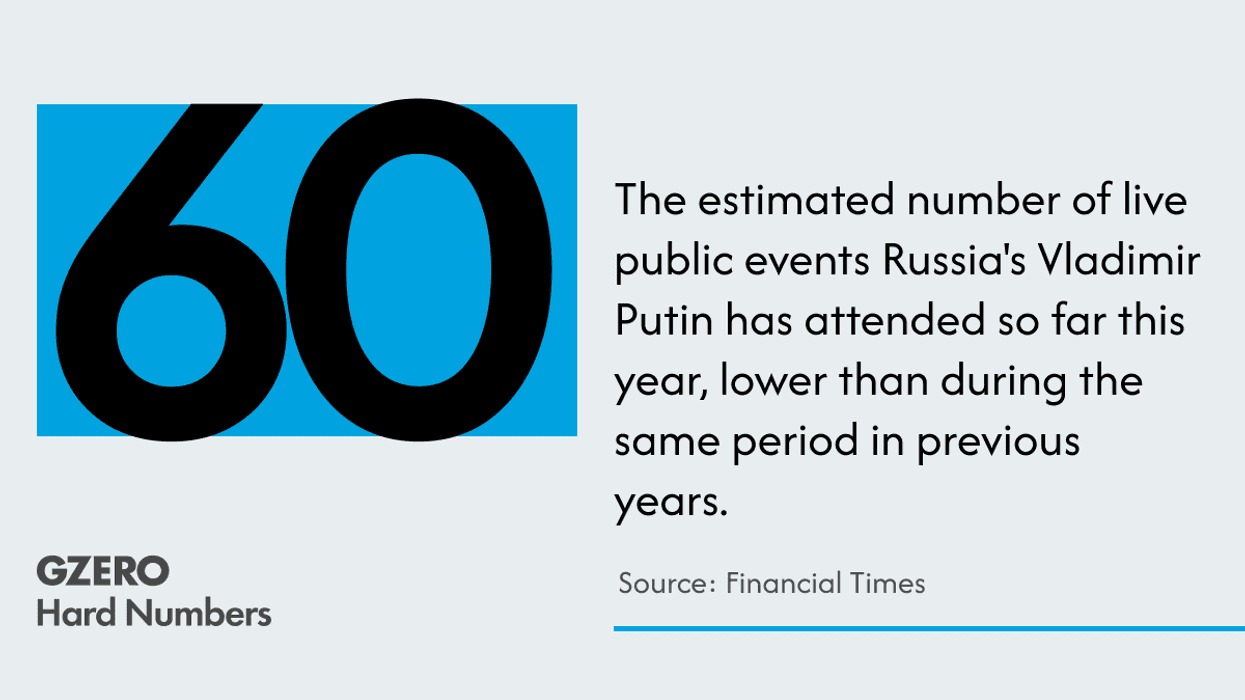

Hard Number: Is Russia stuck in the mud?

May 12, 2026

Can France redefine its relationship with Africa?

May 12, 2026

Iran thinks it has more leverage than Trump

May 11, 2026

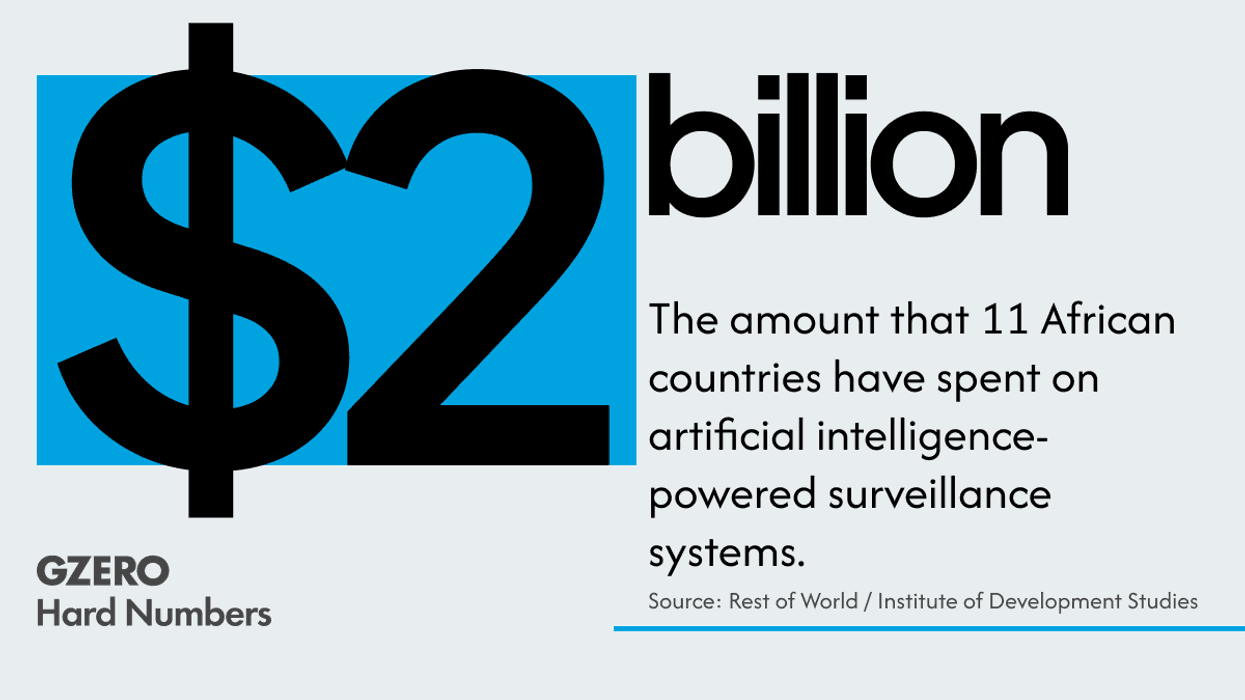

The state of global AI diffusion in 2026

May 11, 2026

GZD 5/11/26

May 11, 2026

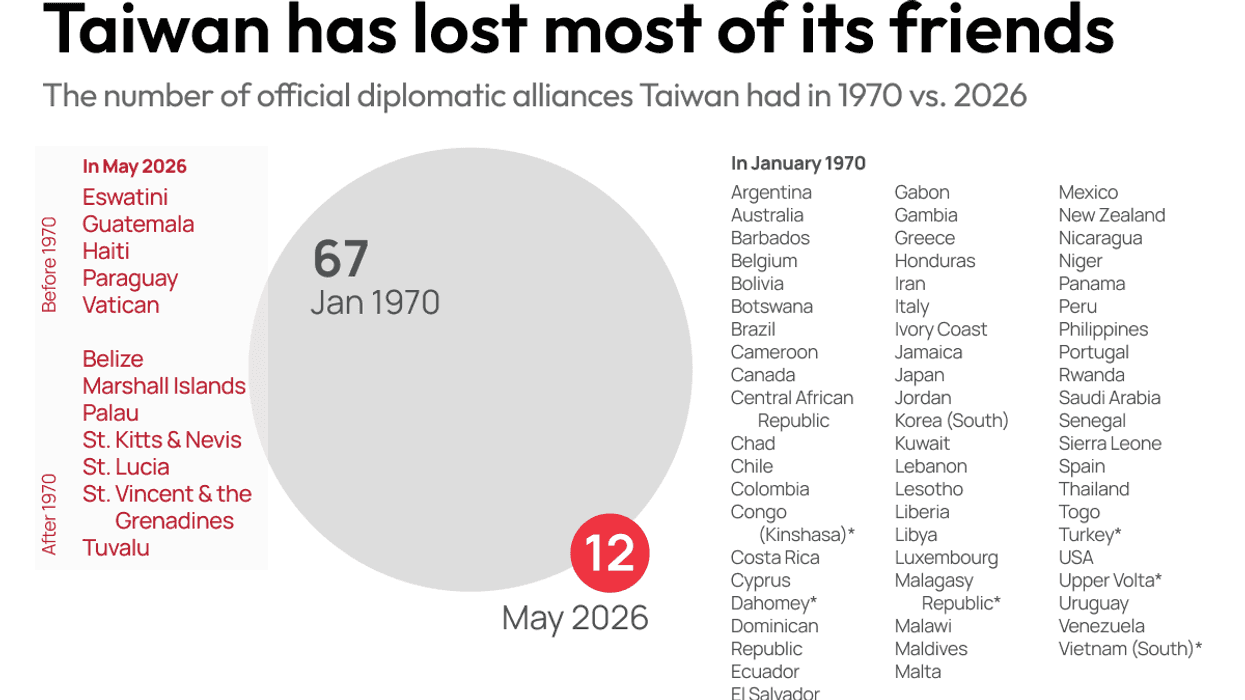

China puts Taiwan on an island

May 11, 2026

Hard Number: US eyes Cuba, literally

May 11, 2026

Is UK PM Keir Starmer finished?

May 11, 2026

The Regime in the Wild, for Sir David Attenborough

May 11, 2026

Inside the Pentagon's AI war machine

May 11, 2026

How AI is transforming the US military

May 08, 2026

US labor market holds steady despite Iran war

May 08, 2026

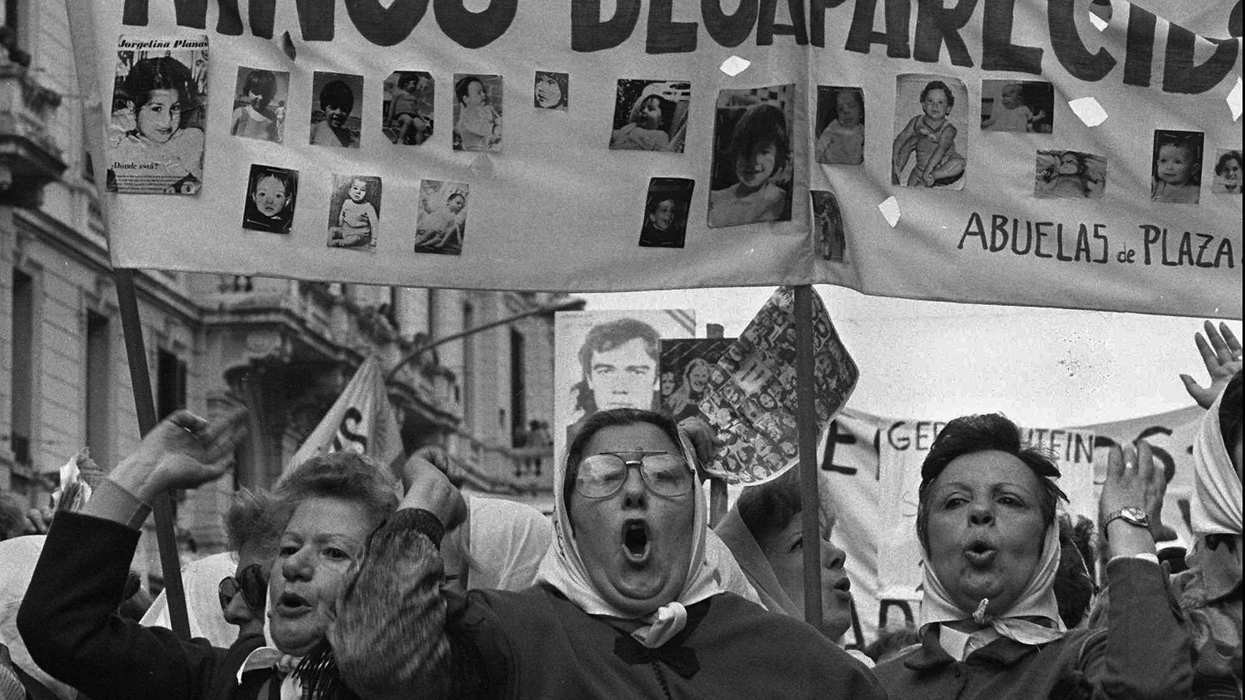

When mothers shook the world

May 08, 2026

You vs. the News: A Weekly News Quiz - May 8, 2026

May 07, 2026

Will Trump actually try to "take" Cuba?

May 07, 2026

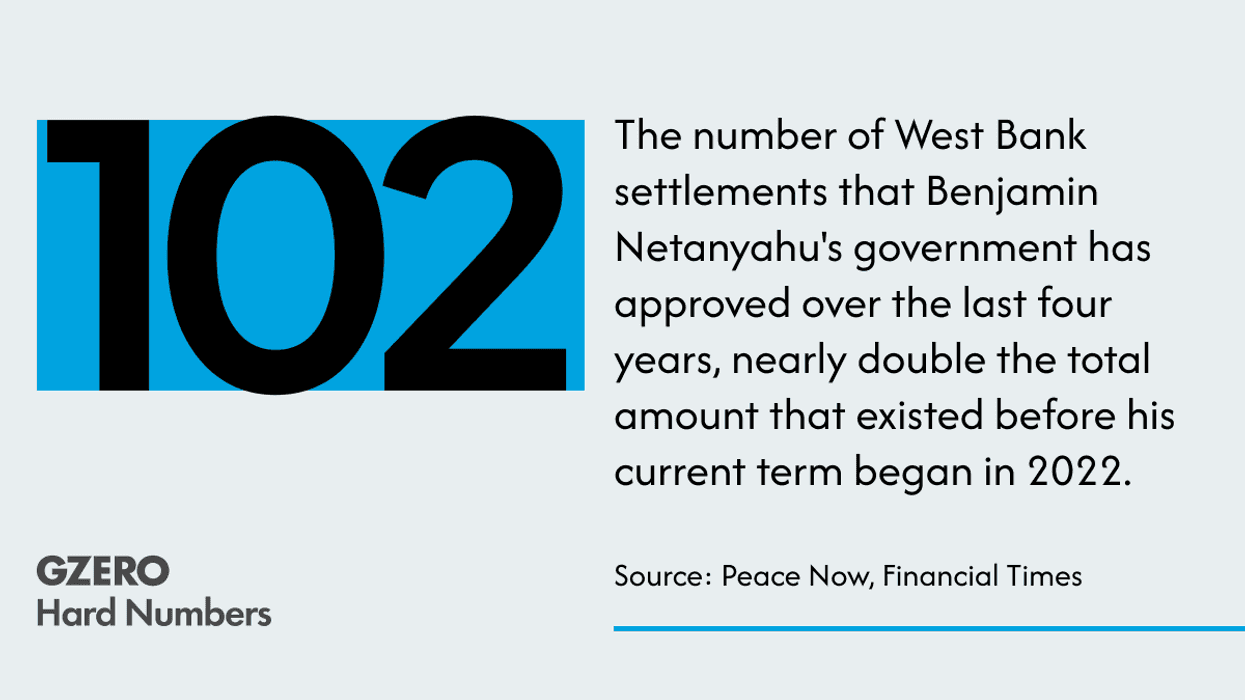

Record Israeli settlements in the West Bank

May 07, 2026

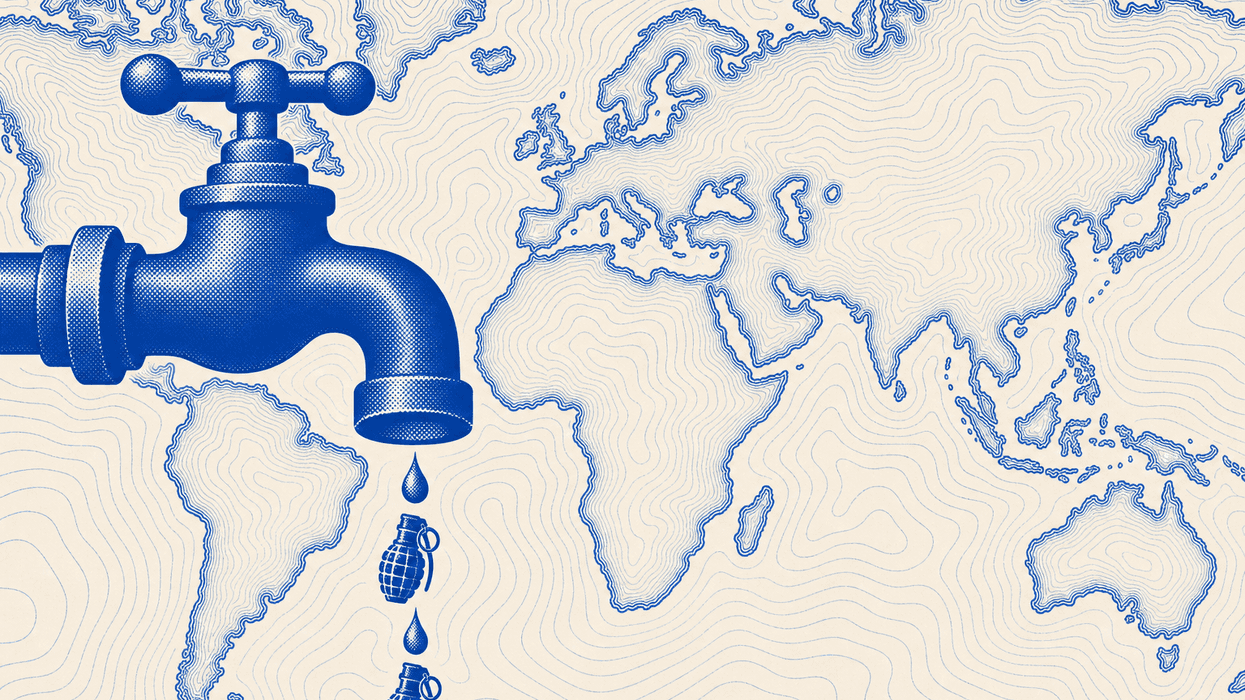

Is water the next geopolitical battle?

May 07, 2026

The next era of mobility

May 06, 2026

Why Trump can't end the Iran war on his terms

May 06, 2026

What’s Good Wednesdays™, May 6, 2026

May 06, 2026

The media's trust problem

May 06, 2026

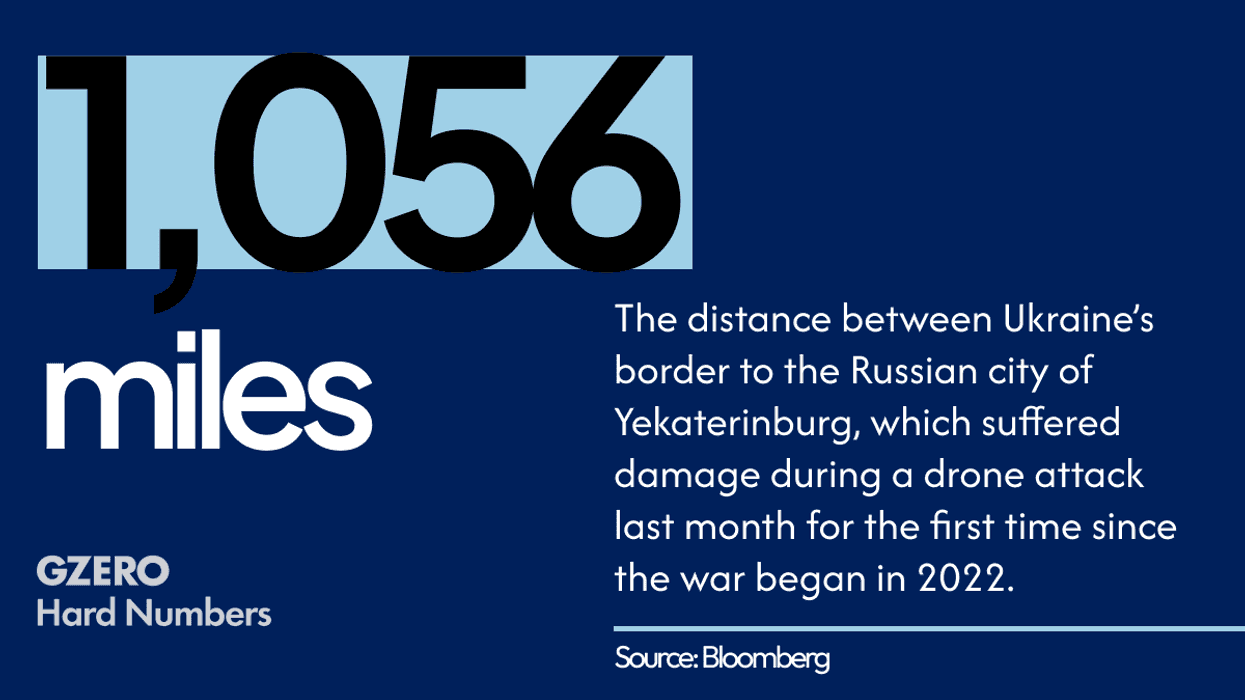

Ukrainian drones go the distance

May 06, 2026

GZD 5/5/26

May 05, 2026

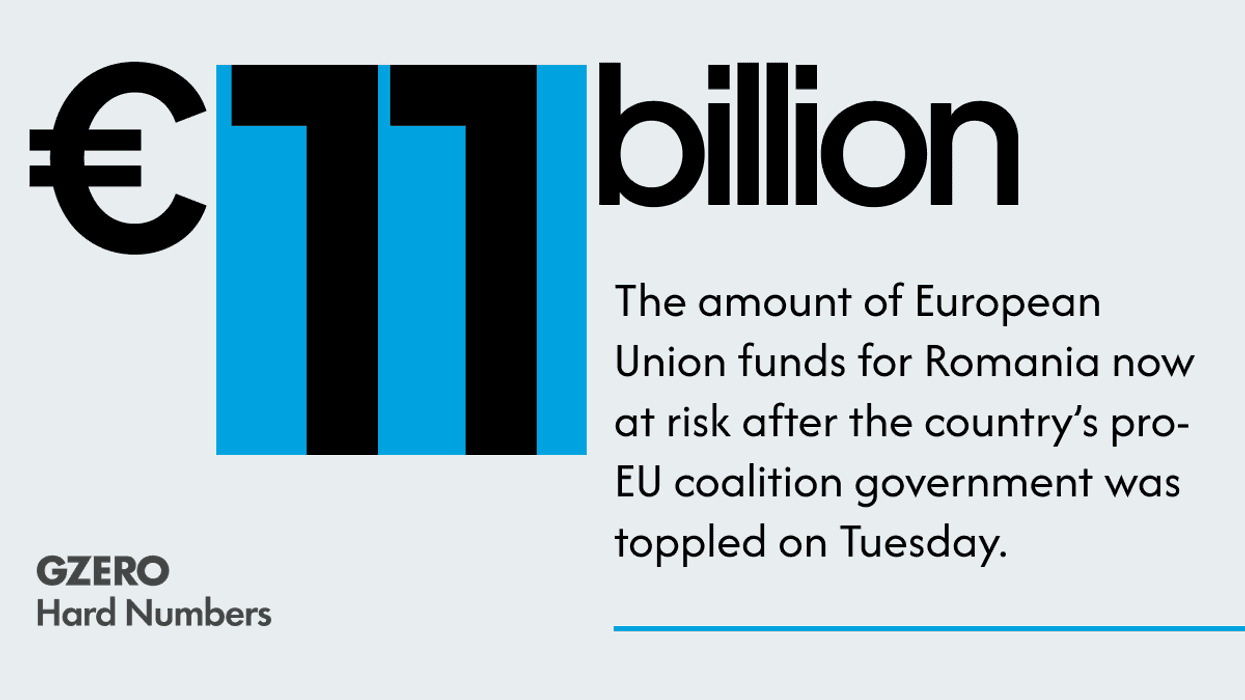

Romania’s government collapses

May 05, 2026

Trump's 'Project Freedom'

May 04, 2026

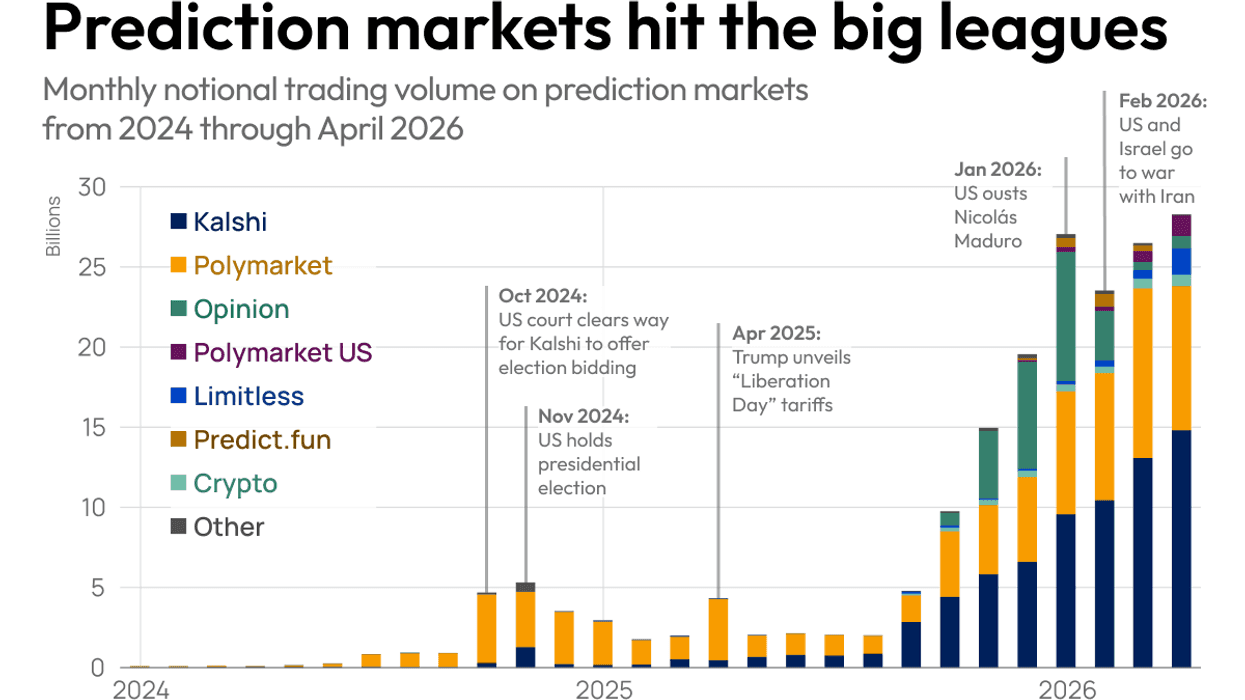

The explosion of prediction markets

May 04, 2026

Putin's paranoia

May 04, 2026

How to prepare the global economy for the age of AI

May 04, 2026

New Trump acronym on Wall Street, muchachos...

May 04, 2026

What Hungary's new leader really wants

May 01, 2026

Jet-setting to Caracas

May 01, 2026

You vs. the News: A Weekly News Quiz - May 1, 2026

April 30, 2026

Taiwan in the crosshairs

April 30, 2026

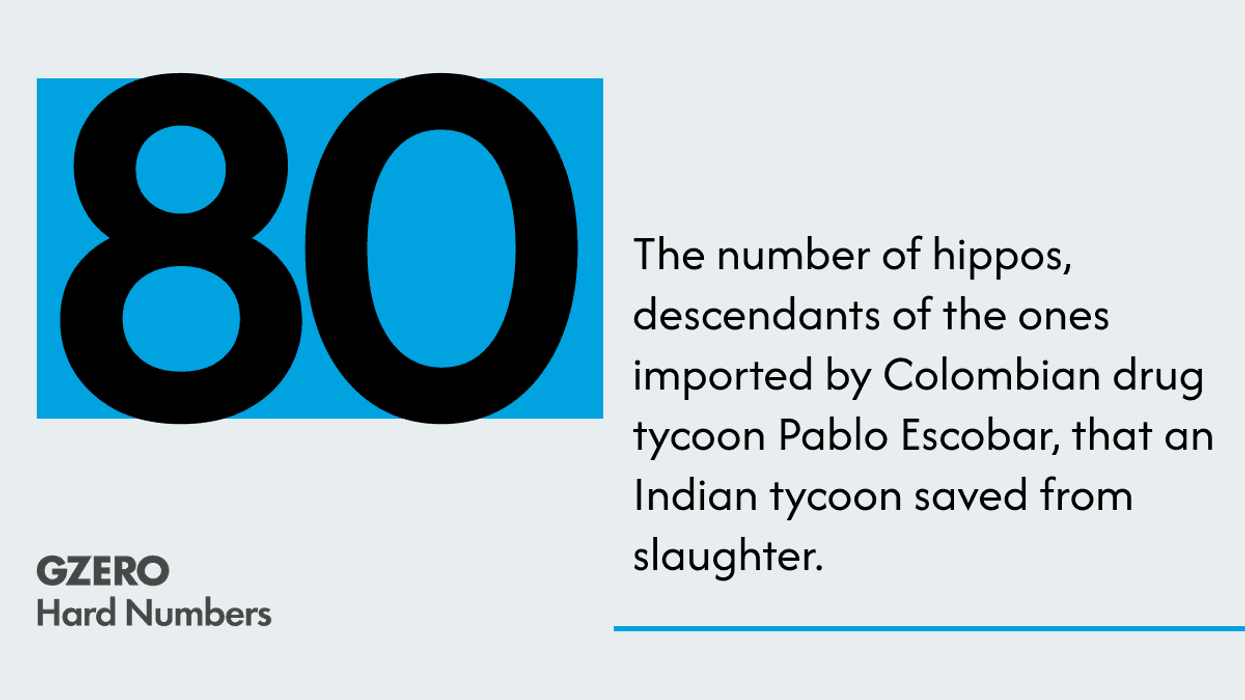

Descendants of Escobar’s hippos live to see another day

April 30, 2026

Ian 4/29/26

April 29, 2026

The world hedges its bets on America

April 29, 2026

The rise of robotics

April 29, 2026

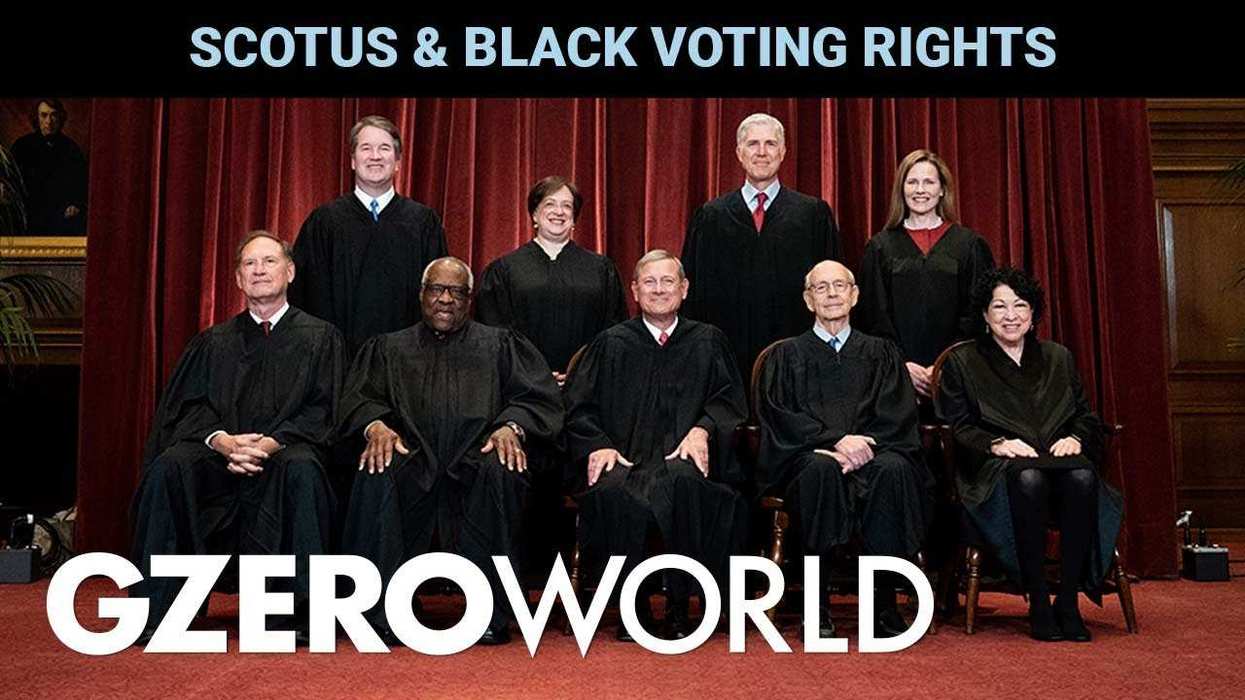

US Supreme Court guts pillar of Voting Rights Act

April 29, 2026

What’s Good Wednesdays™, April 29, 2026

April 29, 2026

GZD 4/29/26

April 29, 2026

Hard number: Trouble in wine country

April 29, 2026

Advancing sustainability through packaging innovation

April 28, 2026

UAE to withdraw from OPEC

April 28, 2026

Hard number: A superyacht gets through Hormuz

April 28, 2026

Can the King be the UK’s Trump card?

April 28, 2026

Trump's Cuba backlash could come from home

April 28, 2026

US-Iran peace talks stall

April 27, 2026

Why Cuba won't be the next Venezuela

April 27, 2026

GZD 4/27/26

April 27, 2026

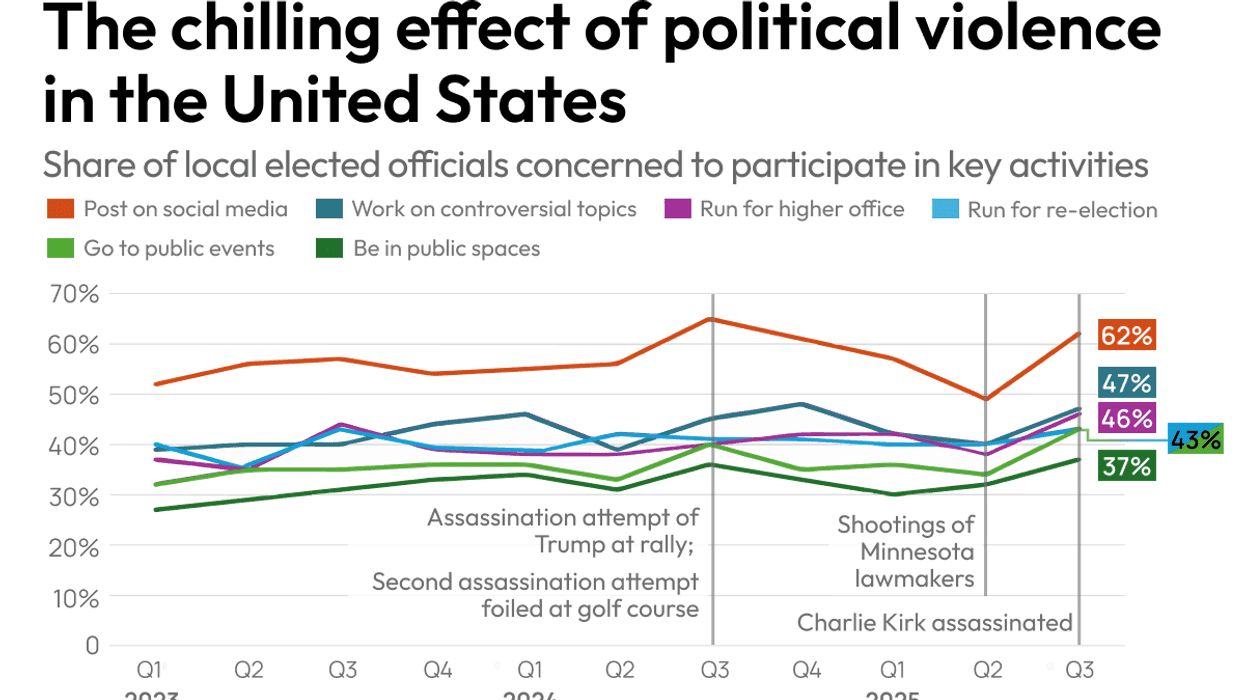

Violence creates an environment of fear in US politics

April 27, 2026

Hard Number: Black Republican exodus from the US House

April 27, 2026

Cuba on the brink

April 27, 2026

The Strait of Hormuz helpline

April 24, 2026

Will Trump actually try to "take" Cuba?

April 24, 2026

GZERO Series

GZERO World with Ian Bremmer

Puppet Regime

Quick Take

ask ian

Ian Explains

GZERO Reports

GZERO Europe

The Debrief

GZERO Daily: our free newsletter about global politics

Keep up with what’s going on around the world - and why it matters.